You can now access AI video generation directly within the Envato experience

Envato's AI video generator just moved into the Envato experience. Faster, more reliable generations and a smoother path from idea to finished clip — all in one place.


Build a workflow that keeps your AI video character’s identity stable across scenes.

AI video generators such as Envato VideoGen are powerful tools. They can build complete scenes from a single AI video prompt, including fully formed characters. But keeping a character consistent across multiple clips is where things become challenging. A single generation might look impressive on its own, yet maintaining the same identity from scene to scene requires a more intentional workflow.

The biggest obstacle to a consistent character is that it is hard to reuse the same character across different environments. Because each generation is created separately, the system does not automatically preserve identity. Even when you reuse similar prompts, small variations in facial structure, clothing, or style can creep in from one clip to the next.

Consistent character design is challenging because AI models don’t truly “remember” identity from one generation to the next. Each clip is produced independently. Unless you provide a stable visual reference, the system reinterprets your written description every time.

Even small reinterpretations can cause noticeable shifts in facial structure, clothing, or style.

These micro-variations may seem minor in isolation, but across multiple scenes they quickly break the illusion of a single, continuous character.

Animators solved this problem long before generative AI existed. Instead of redrawing a character from memory each time, they relied on structured reference sheets to lock in proportions, features, and styling. The same foundation is essential when you want a consistent character in AI video.

The same principle applies here. By creating a master version of your character, generating a few supporting views, and reusing that reference across multiple video scenes, you introduce stability into your process. When your character is anchored to a defined visual source, maintaining identity becomes far more controlled, predictable, and repeatable.

In animation, characters are carefully defined before they ever appear across multiple scenes. Artists create detailed visual references to ensure the character’s proportions, features, and styling remain consistent. An AI video workflow benefits from the same groundwork.

These reference sheets usually include standard angles — front, side, and back views — so every structural detail stays locked in. They act as a visual anchor. When the character appears in different shots, lighting conditions, or environments, the core design remains stable. Applying this approach to AI video makes it far easier to maintain a consistent character across multiple generated scenes.

Character sheets remove ambiguity and provide a reference to follow. As the character moves between scenes, poses, or different lighting setups, the core design stays the same.

In studio environments, this is especially important. Multiple artists may animate the same character across different shots. The character sheet ensures that no matter who is working on the scene, the character’s identity and design stay consistent.

The same principle applies when generating AI video scenes, especially if your goal is a consistent character. If you prompt a character from scratch every time, small variations are almost guaranteed. However, when you work from a master reference, such as a structured character sheet, you dramatically reduce inconsistency and gain far more creative control.

Instead of generating a fresh interpretation for each new scene, you anchor future outputs to a defined visual source. This structured approach makes your character far more stable, predictable, and production-ready.

Before starting, it helps to understand the simple workflow behind a consistent AI video character. We’ll use three tools in a clear, structured sequence, each with a specific role in stabilizing identity across scenes. The goal is to create one reliable character reference sheet and reuse it across multiple video environments so your character remains visually stable and recognizable from clip to clip.

To maintain character stability across multiple scenes, you’ll use a simple three-step tool workflow. Each tool plays a specific role in creating, refining, and reusing your character, keeping the character’s identity consistent from one video to the next.

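By the end of the process, you’ll have a master character image, a model sheet showing front, side, and back views, and video scenes that reuse the same character.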

Everything starts with a single, clean reference image. No distractions, just something that is clear and neutral. This will become the foundation for the design of your character, so it’s worth taking a few extra minutes to get it right.

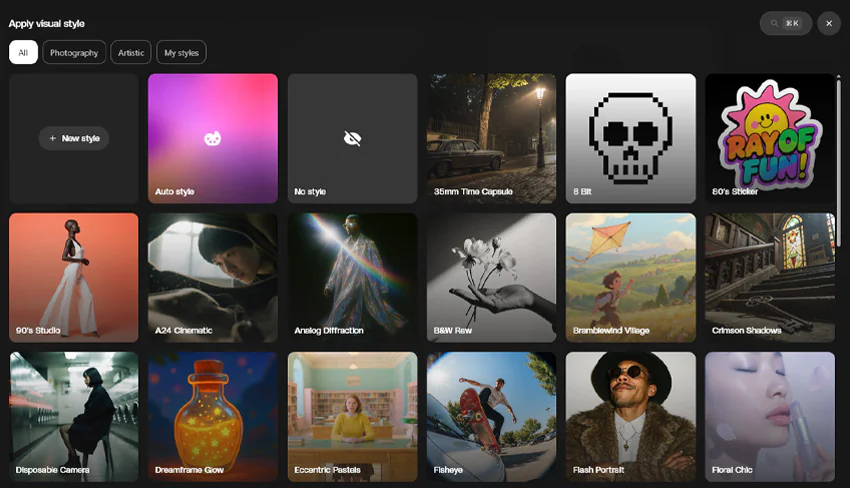

ImageGen lets you choose an initial style to guide your character’s visual direction.

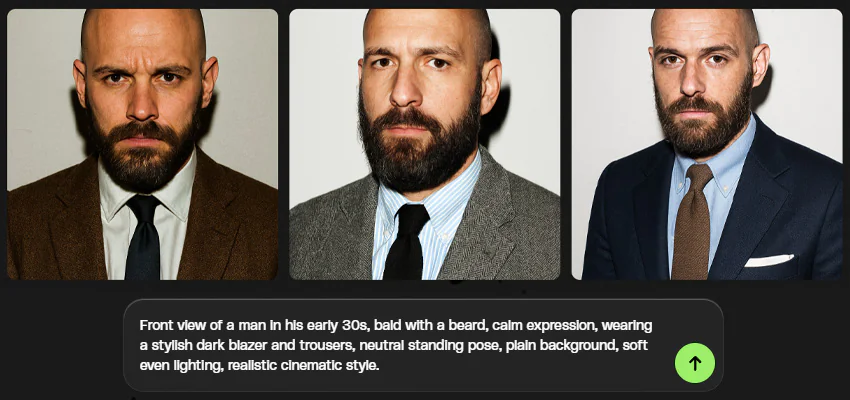

For your master character, keep things straightforward. Avoid dramatic lighting, motion, or backgrounds. A neutral setup makes it easier to reuse the character later. For the prompt, we want to establish a few core things. Here’s a simple prompt formula that you can use to do this:

[View] + [Age / gender] + [Key physical traits] + [Clothing] + [Pose] + [Background] + [Lighting] + [Style]

Here’s an example of how you can use this AI image prompt formula to create a prompt for your character:

Front view of a man in his early 30s, bald with a beard, calm expression, wearing a stylish dark blazer and trousers, neutral standing pose, plain background, soft even lighting, realistic cinematic style.
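The formula can also be kept as a small template, so each slot is written down once and reused word for word. The sketch below is illustrative only: `CharacterSpec` and `build_prompt` are hypothetical names, not part of ImageGen, and the code simply assembles the text you would paste into the prompt box.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CharacterSpec:
    """One field per slot in the prompt formula (hypothetical helper)."""
    view: str
    age_gender: str
    traits: str
    clothing: str
    pose: str
    background: str
    lighting: str
    style: str


def build_prompt(spec: CharacterSpec) -> str:
    # The first two slots read as one phrase; the rest are comma-separated.
    subject = f"{spec.view} of {spec.age_gender}"
    parts = [
        subject,
        spec.traits,
        f"wearing {spec.clothing}",
        spec.pose,
        spec.background,
        spec.lighting,
        spec.style,
    ]
    return ", ".join(parts) + "."


master = CharacterSpec(
    view="Front view",
    age_gender="a man in his early 30s",
    traits="bald with a beard, calm expression",
    clothing="a stylish dark blazer and trousers",
    pose="neutral standing pose",
    background="plain background",
    lighting="soft even lighting",
    style="realistic cinematic style",
)

print(build_prompt(master))
```

Run as written, this reproduces the example prompt above. Keeping the slots in one place makes it easier to change a single detail, such as the view, without accidentally rewording the rest.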

Now that you have a strong master image, the next step is to expand it into additional angles. This is where you begin turning a single character image into a usable reference.

Open ImageEdit and upload your master character into Nano Banana. Instead of describing the character from scratch again, you’ll use the existing image as the foundation and instruct the tool to generate new views based on it. The most important ones are the side and back views, which complement your front-facing master image.

Here’s an example prompt asking Nano Banana to create a new view of your character:

Generate a clean side view of the same character. Keep the same style, outfit, and proportions. Plain background.

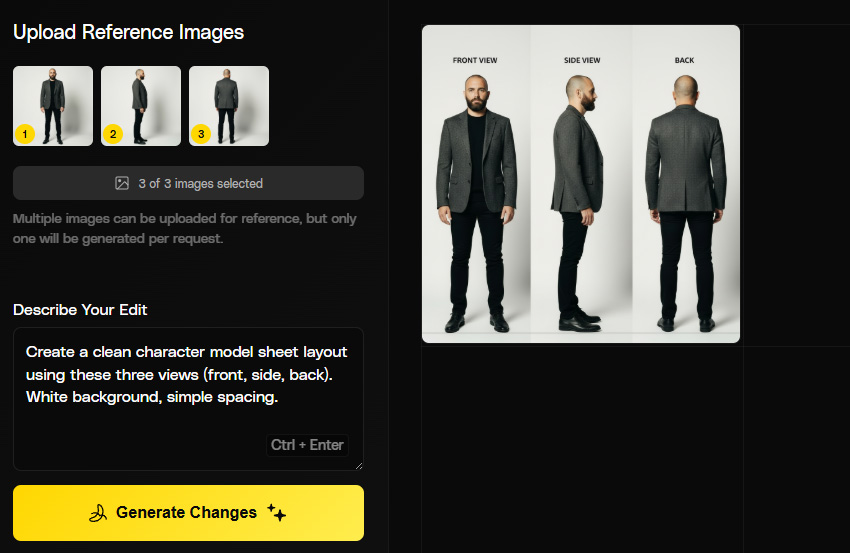

At this stage, you’ll have three separate images showing different angles of the same character. From here, we’ll be placing them together in a single frame, which allows us to see the design as a whole.

This combined image will serve as your reference going forward. When generating new scenes later, you’re no longer relying only on a written description, because you have a defined visual version of the character to guide the process.

Upload your three images into Nano Banana and prompt it to organise them into a structured layout. Keep the design minimal. A plain background and even spacing are all you need.

For example:

Create a clean character model sheet layout using these three views (front, side, back). White background, simple spacing.

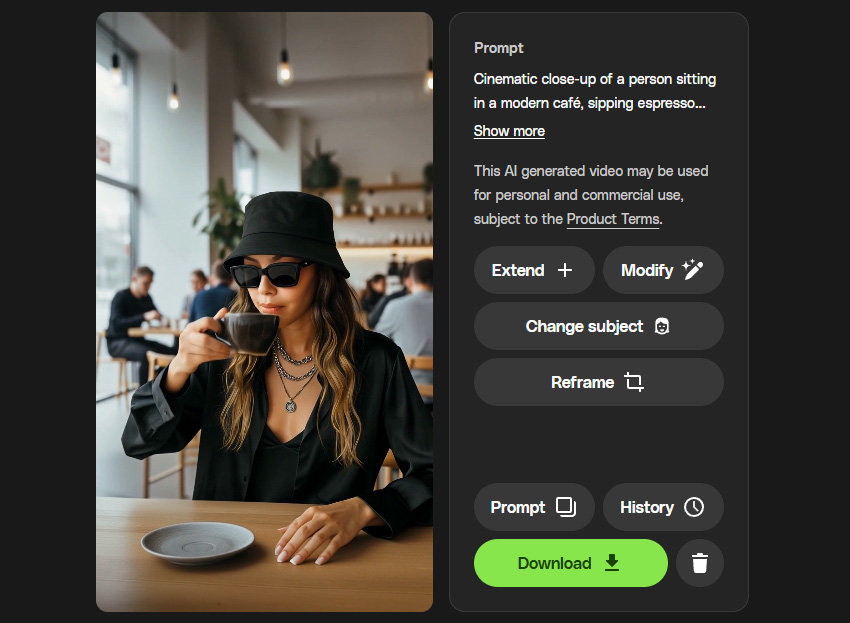

With your character reference prepared, you can now shift focus to the environment. Open VideoGen and generate the scene itself first, without trying to control the character at this stage.

Describe the space, lighting, and framing, and avoid specifying the character in detail. The subject in this version is temporary and will be replaced later. For example:

Cinematic close-up of a person sitting in a modern café, sipping espresso from a black cup. Soft natural window light, shallow depth of field, warm tones, subtle background blur with people chatting behind. Calm, contemplative mood, handheld camera with slight natural movement.

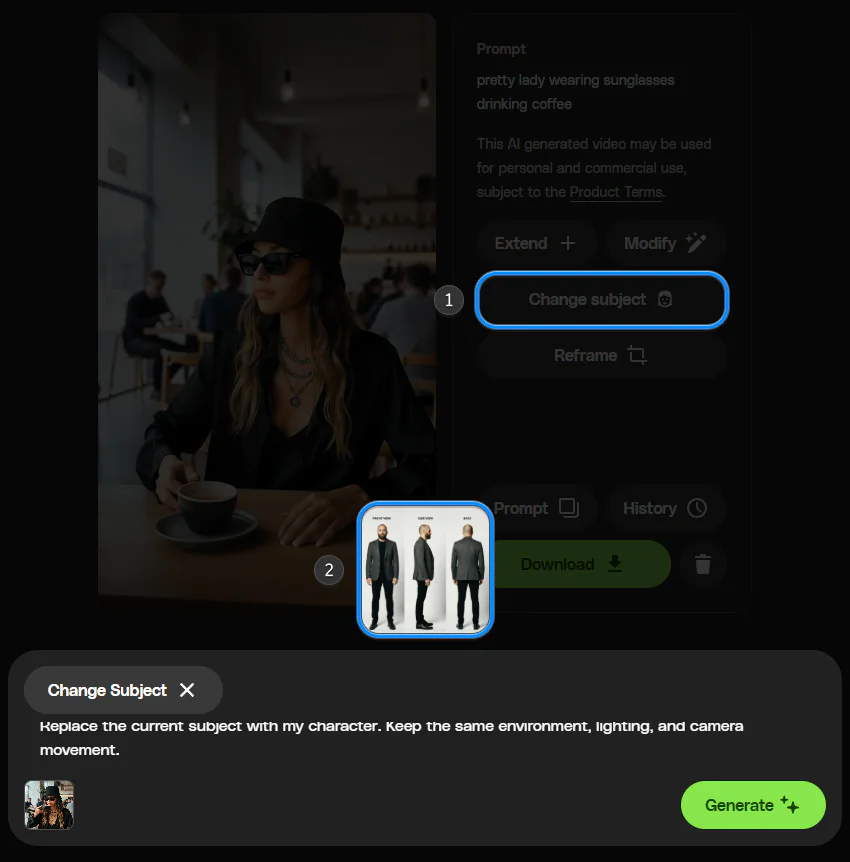

Once your scene is generated, you can replace the default subject with your own character. This is where your earlier preparation comes in handy.

In VideoGen, use the Change Subject button and upload your master character image (or your model sheet if it works better for your project).

Instead of rewriting the entire scene prompt, keep the instruction short and focused on replacement.

For example:

Replace the current subject with my character. Keep the same environment, lighting, and camera movement.

Once you’ve successfully swapped your character into one scene, the process becomes repeatable.

Instead of generating a new version of the character each time, you return to the same character reference sheet and place it into different environments.
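The repeatable part of the process can be written down as a simple shot list: each scene gets its own prompt, while the reference image and the replacement instruction never change. The sketch below is only a planning aid under stated assumptions. `Shot`, `plan_shots`, the file name `character-sheet.png`, and the second scene are all invented for illustration, and the code does not talk to VideoGen.

```python
from dataclasses import dataclass

# The replacement instruction from the example above, kept as one constant
# so it is never reworded between scenes.
REPLACE_INSTRUCTION = (
    "Replace the current subject with my character. "
    "Keep the same environment, lighting, and camera movement."
)


@dataclass(frozen=True)
class Shot:
    """One planned clip: a scene prompt plus the shared character inputs."""
    scene_prompt: str
    reference_image: str
    instruction: str = REPLACE_INSTRUCTION


def plan_shots(scene_prompts, reference_image):
    # Every shot points at the same reference file and the same instruction.
    return [Shot(prompt, reference_image) for prompt in scene_prompts]


shots = plan_shots(
    [
        "Cinematic close-up of a person sitting in a modern café.",
        # Made-up second scene, only to show the pattern repeating.
        "Wide shot of a person walking down a rainy city street at night.",
    ],
    reference_image="character-sheet.png",  # hypothetical file name
)

for number, shot in enumerate(shots, start=1):
    print(f"Shot {number}: {shot.scene_prompt}")
    print(f"  reference: {shot.reference_image}")
    print(f"  instruction: {shot.instruction}")
```

Only the scene prompt varies from shot to shot, which mirrors the idea of placing one character reference into different environments.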

Consistency issues sometimes come from the starting image itself. Lighting, angle, and expression can all influence how stable the character appears in later scenes. Here are a few common issues and how to address them.

If facial structure shifts between generations, the master image may not be neutral or clear enough. Strong shadows, angled poses, or dramatic expressions can introduce variation.

Use a clean, front-facing image with even lighting as your primary reference. When swapping into VideoGen, keep the replacement instruction simple and avoid adding new facial details.

Outfits can drift when they aren’t clearly defined. Small wording differences in prompts may lead the system to reinterpret the character’s styling.

Make sure the clothing description stays consistent with your master image. When using Change Subject, refer to the outfit in the same words each time rather than rephrasing it.
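If you keep your prompts in a text file or notes, a crude check can catch reworded outfits before you generate anything. This is a rough sketch, assuming the outfit is always written as one exact phrase; `find_outfit_drift` is a hypothetical helper, and it only compares text, so it cannot tell you what the generated images actually look like.

```python
def find_outfit_drift(prompts, outfit):
    """Return the positions of prompts that lack the exact outfit phrase."""
    needle = outfit.lower()
    return [i for i, prompt in enumerate(prompts) if needle not in prompt.lower()]


OUTFIT = "a stylish dark blazer and trousers"

prompts = [
    "Front view of a man in his early 30s, wearing a stylish dark blazer and trousers.",
    "Side view of the same man, wearing a stylish dark blazer and trousers.",
    # Reworded outfit: the kind of small change that can cause drift.
    "Back view of the same man, wearing a smart black jacket and pants.",
]

print(find_outfit_drift(prompts, OUTFIT))  # prints [2]
```

The third prompt is flagged because its outfit wording differs, even though a human reader would consider the descriptions roughly equivalent.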

If one scene appears more realistic and another more stylised, the issue might come from mixing style cues across prompts. Keep your visual style consistent and avoid combining conflicting descriptors.
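A similar text check can flag prompts that mix style cues. The word groups in `STYLE_FAMILIES` are assumptions chosen for this example, not a list used by any Envato tool, and `style_families` and `mixes_styles` are hypothetical helper names. Treat this as a sketch to adapt to your own vocabulary.

```python
import re

# Assumed groupings of style words; adjust them to the descriptors you use.
STYLE_FAMILIES = {
    "realistic": {"realistic", "photorealistic"},
    "illustrated": {"illustrated", "stylised", "stylized", "cartoon"},
}


def style_families(prompt):
    """Return the names of the style families whose words appear in the prompt."""
    words = set(re.findall(r"[a-z]+", prompt.lower()))
    return {name for name, terms in STYLE_FAMILIES.items() if words & terms}


def mixes_styles(prompts):
    """True when the prompts, taken together, draw on more than one family."""
    found = set()
    for prompt in prompts:
        found |= style_families(prompt)
    return len(found) > 1


consistent = [
    "realistic cinematic style, modern interior",
    "realistic cinematic style, city street",
]
mixed = [
    "realistic cinematic style, modern interior",
    "stylised cartoon look, city street",
]

print(mixes_styles(consistent))  # False
print(mixes_styles(mixed))       # True
```

A `True` result is only a prompt-level warning that two style families appear in the same project; whether the clips actually look different still needs a visual check.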

Facial features can look different under certain lighting or from unusual camera angles. Strong colour effects or extreme perspectives tend to make those differences more noticeable.

If this happens, simplify the scene setup. Neutral camera angles and balanced lighting tend to preserve identity more reliably.

A consistent character becomes significantly more useful once you move beyond single, standalone clips. When the design remains stable across scenes, you can build continuity rather than relying on isolated generations. This workflow is particularly effective in situations where repeatability and recognition matter.

Some of the most practical use cases include:

Creating consistent AI characters does not require complex tools. It simply requires a clear reference and a repeatable process. By defining your character once, generating supporting views, and reusing that reference inside VideoGen, you introduce stability into your scenes.

So try this workflow with your own character and experiment with placing them in different environments to see how consistent your results can become!

A character sheet isn’t required for every situation. For a one-off clip, a single image may be enough. It becomes more relevant when the same character appears repeatedly across different scenes.

Minor variation can still happen. If that occurs, review your master image first. Neutral lighting, a clear pose, and minimal background distractions tend to produce more stable results. Keeping your replacement instructions short and consistent also helps.

The same approach applies whether your character is realistic, illustrated, or stylised. The key is creating a clear reference first and reusing it consistently rather than regenerating the character from scratch.

In many cases, a clean front-facing master image is enough on its own to maintain stability when using Change Subject. The additional side and back views simply provide extra clarity and help reduce drift in more complex scenes.

When using Change Subject, it’s usually better to focus only on replacing the subject while keeping the environment and camera setup unchanged. Adding too many new descriptors can introduce variation.

This workflow is designed for generative AI tools and rapid content creation. It helps improve consistency within that context, but it is not intended to replace full animation production pipelines.

