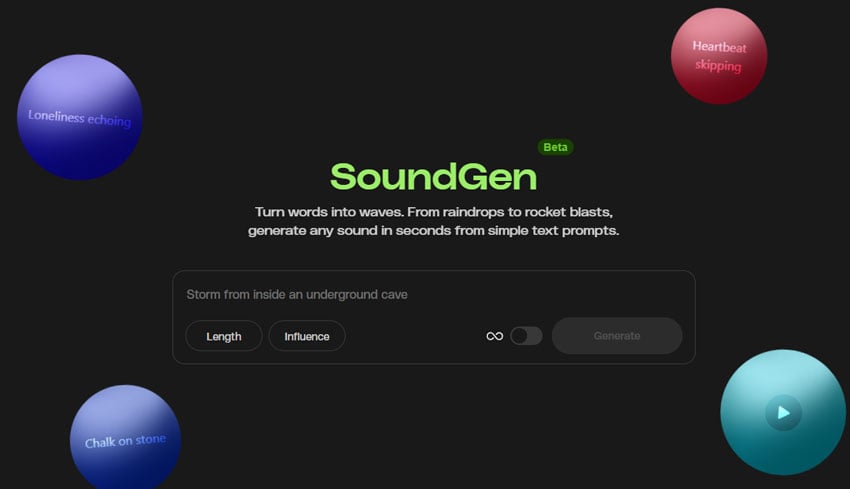

Bigger scenes, better physics: Seedance 2.0 just landed on Envato

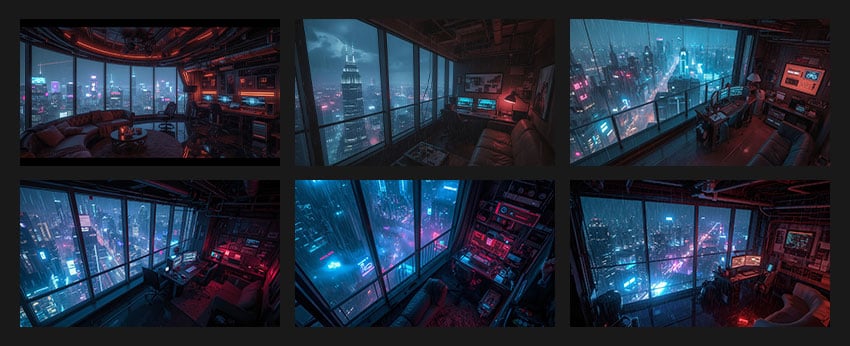

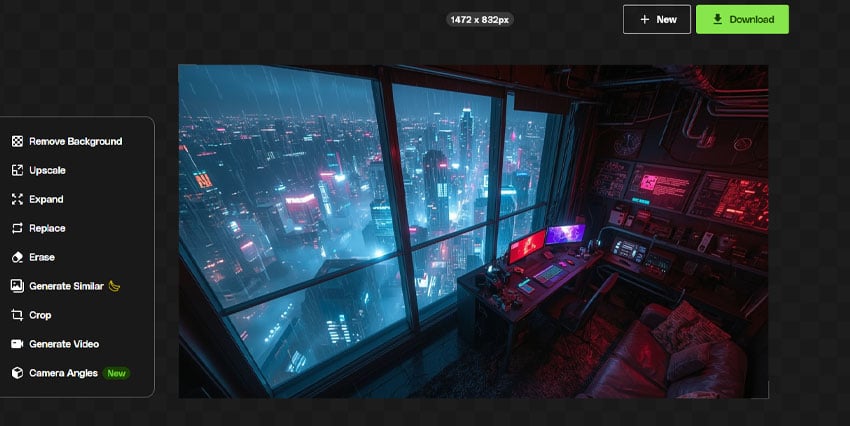

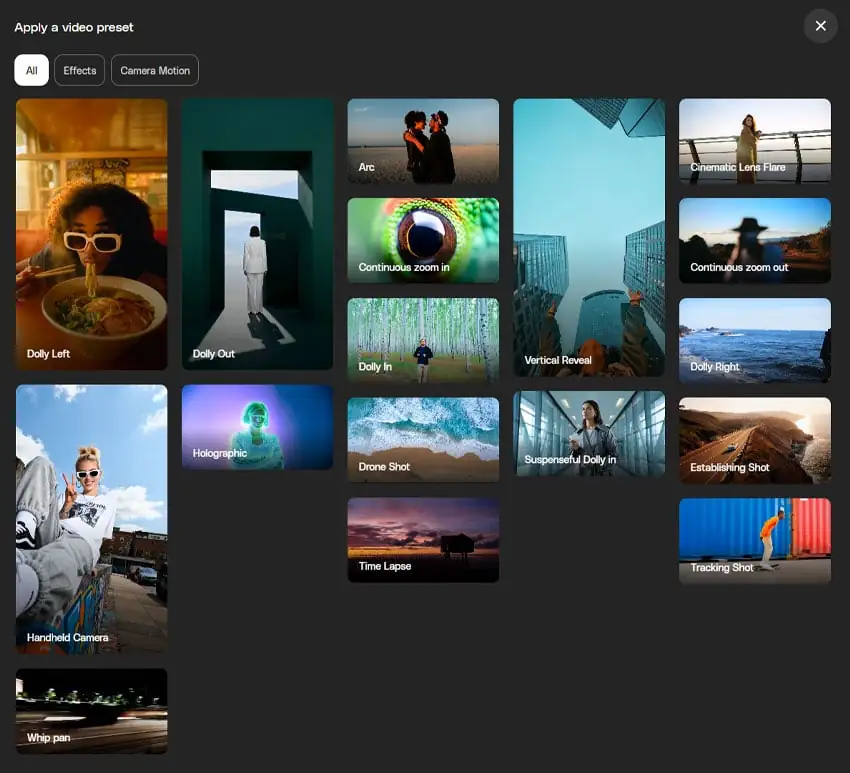

Seedance 2.0, ByteDance's AI video model known for its physical realism and scene complexity, is now part of the model lineup behind Envato's AI video generator. Here's what changes.